What Is GLM-5.1?

On April 7, 2026, Z.AI (Zhipu AI, listed on the Hong Kong Stock Exchange with a $52.83B market cap) released GLM-5.1 — a 754-billion parameter Mixture-of-Experts model under the MIT License with weights on HuggingFace (zai-org/GLM-5.1).

Key specs:

- Parameters: 754B (Mixture-of-Experts)

- Context window: 200K tokens

- Max output: 128K tokens

- License: MIT — fully open source, commercially permissive

- Autonomous duration: Up to 8 hours of continuous work
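
To make the two token limits concrete, here is a minimal sketch of a budget check. It assumes the prompt and the generated output share the 200K context window, which the spec list does not state explicitly; the function name `max_allowed_output` is hypothetical.

```python
# Limits copied from the spec list above (in tokens).
CONTEXT_WINDOW = 200_000
MAX_OUTPUT = 128_000


def max_allowed_output(prompt_tokens: int) -> int:
    """Largest output budget that still fits.

    Assumption: prompt and output tokens share one context window.
    """
    if prompt_tokens < 0:
        raise ValueError("prompt_tokens must be non-negative")
    remaining = CONTEXT_WINDOW - prompt_tokens
    # Never exceed the per-response cap, never go below zero.
    return max(0, min(MAX_OUTPUT, remaining))


for prompt in (10_000, 150_000, 250_000):
    print(prompt, max_allowed_output(prompt))
```

Under that assumption, the full 128K output is only available while the prompt stays at or below 72K tokens.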

GLM-5.1 is positioned neither as a chatbot nor primarily as a reasoning model. It is a worker, built specifically for agentic engineering tasks that require sustained, autonomous execution over long periods.

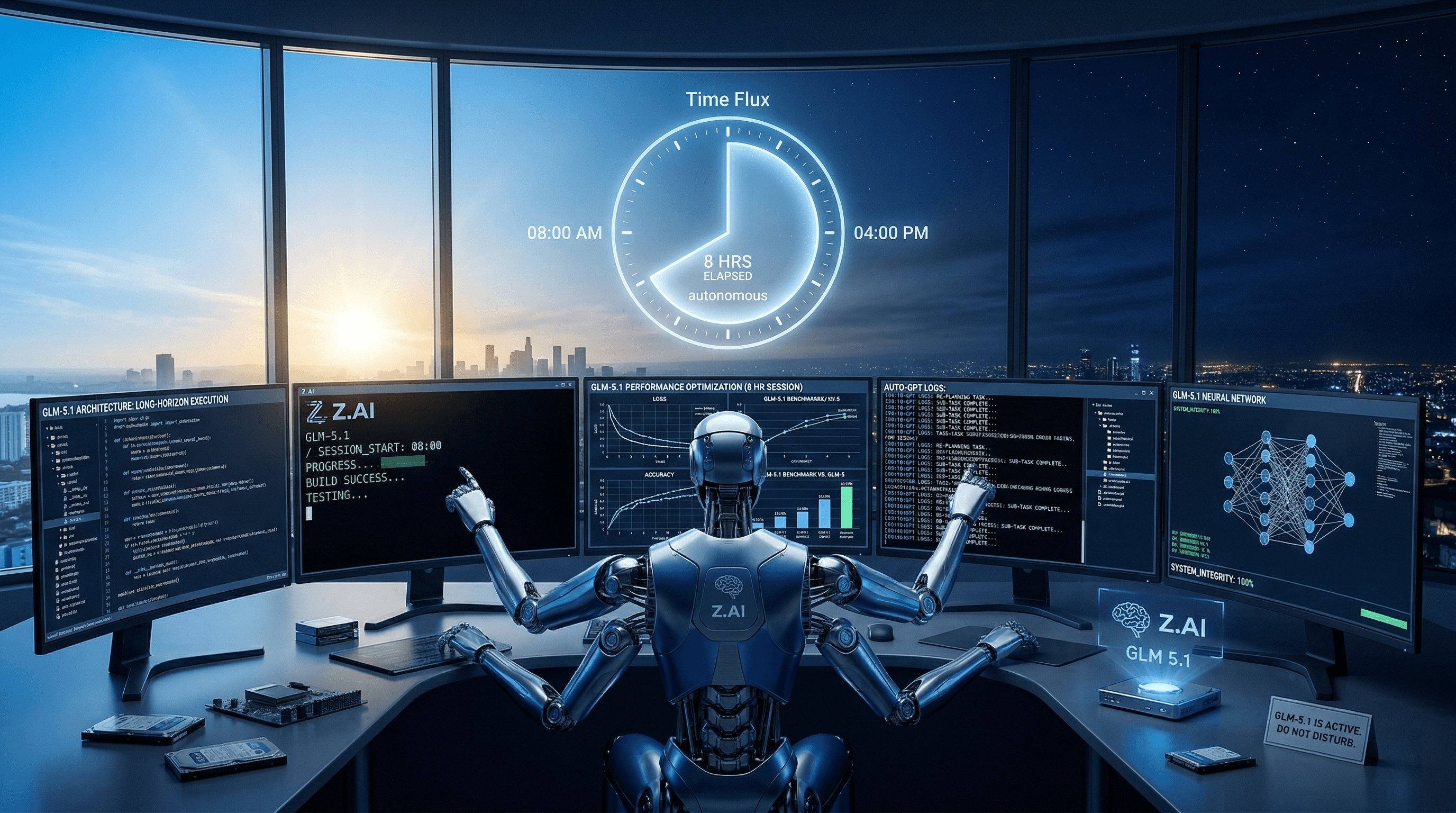

The 8-Hour Autonomous Agent

Previous frontier models could sustain about 20 autonomous steps by the end of 2025. GLM-5.1 handles 1,700 steps in a single session. A Z.AI leader stated on X: 'autonomous work time may be the most important curve after scaling laws.'

The flagship demo was remarkable: GLM-5.1 built a complete Linux desktop environment from scratch in 8 hours — including a file browser, terminal emulator, text editor, system monitor, and functional games. All without human intervention.

During benchmark testing, the model performed 600+ optimization iterations and 6,000+ tool calls. Unlike other models that exhaust their repertoire early, GLM-5.1 keeps finding new optimization strategies throughout long sessions.

This introduces a new metric for evaluating AI models: productive autonomy — not chat quality, not reasoning speed, but hours of useful work.

Benchmarks: #1 Open Source, Competitive With Every Frontier Model

GLM-5.1 benchmarks tell a clear story:

| Benchmark | Score | Notes |
|---|---|---|
| SWE-Bench Pro | 58.4% | #1 overall |
| AIME 2026 | 95.3 | Near-perfect math reasoning |
| GPQA-Diamond | 86.2 | Strong graduate-level QA |
| Terminal-Bench 2.0 | 63.5 (66.5 with Claude Code) | Real-world terminal tasks |
| CyberGym | 68.7 | Up from GLM-5's 48.3 |
| MCP-Atlas | 71.8 | MCP integration |

GLM-5.1 ranks #1 among open-source models and #3 overall across the combined SWE-Bench Pro, Terminal-Bench, and NL2Repo benchmarks.

The SWE-Bench Pro result is the headline: this is the first time an open-source model has taken the top spot on the most respected real-world coding benchmark, beating GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro.

GLM-5.1 vs Frontier Models: Full Comparison

Here is how GLM-5.1 stacks up against current frontier models:

| Model | SWE-Bench Pro | Autonomous Duration | Context (tokens) | License | Price (USD in / out per 1M tokens) | Local Deploy |
|---|---|---|---|---|---|---|
| GLM-5.1 | 58.4% | 8 hours | 200K | MIT | $1.40 / $4.40 | Yes |
| Claude Opus 4.6 | ~55% | ~1 hour | 1M | Proprietary | $15 / $75 | No |
| GPT-5.4 | ~56% | ~2 hours | 256K | Proprietary | $15 / $60 | No |
| Gemini 3.1 Pro | ~54% | ~1.5 hours | 2M | Proprietary | $1.25 / $5 | No |
| DeepSeek V4 | ~52% | ~1 hour | 128K | MIT | $0.55 / $2.19 | Yes |

The key takeaway: on the figures above, GLM-5.1 posts the highest SWE-Bench Pro score in this comparison while being fully open-source and at least 10x cheaper per token than Claude Opus 4.6 or GPT-5.4. For enterprises that need data privacy through self-hosting, GLM-5.1 and DeepSeek V4 are the only models in the table that can be deployed locally.
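
The price gap can be checked directly from the list prices in the comparison table. The sketch below only does that arithmetic; the helper name `price_ratio` is hypothetical, and the figures are the table's, not independently verified.

```python
# Prices in USD per million tokens as (input, output), copied from the table above.
PRICES = {
    "GLM-5.1": (1.40, 4.40),
    "Claude Opus 4.6": (15.00, 75.00),
    "GPT-5.4": (15.00, 60.00),
    "Gemini 3.1 Pro": (1.25, 5.00),
    "DeepSeek V4": (0.55, 2.19),
}


def price_ratio(model: str, baseline: str = "GLM-5.1") -> tuple[float, float]:
    """Return (input_ratio, output_ratio) of a model's price to the baseline's."""
    model_in, model_out = PRICES[model]
    base_in, base_out = PRICES[baseline]
    return (model_in / base_in, model_out / base_out)


for name in PRICES:
    in_ratio, out_ratio = price_ratio(name)
    print(f"{name:16s} input x{in_ratio:5.2f}  output x{out_ratio:5.2f}")
```

On these numbers, Claude Opus 4.6 costs about 10.7x as much for input and 17x for output, and GPT-5.4 about 10.7x and 13.6x. Gemini 3.1 Pro is roughly at parity with GLM-5.1, and DeepSeek V4 is cheaper on both input and output.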

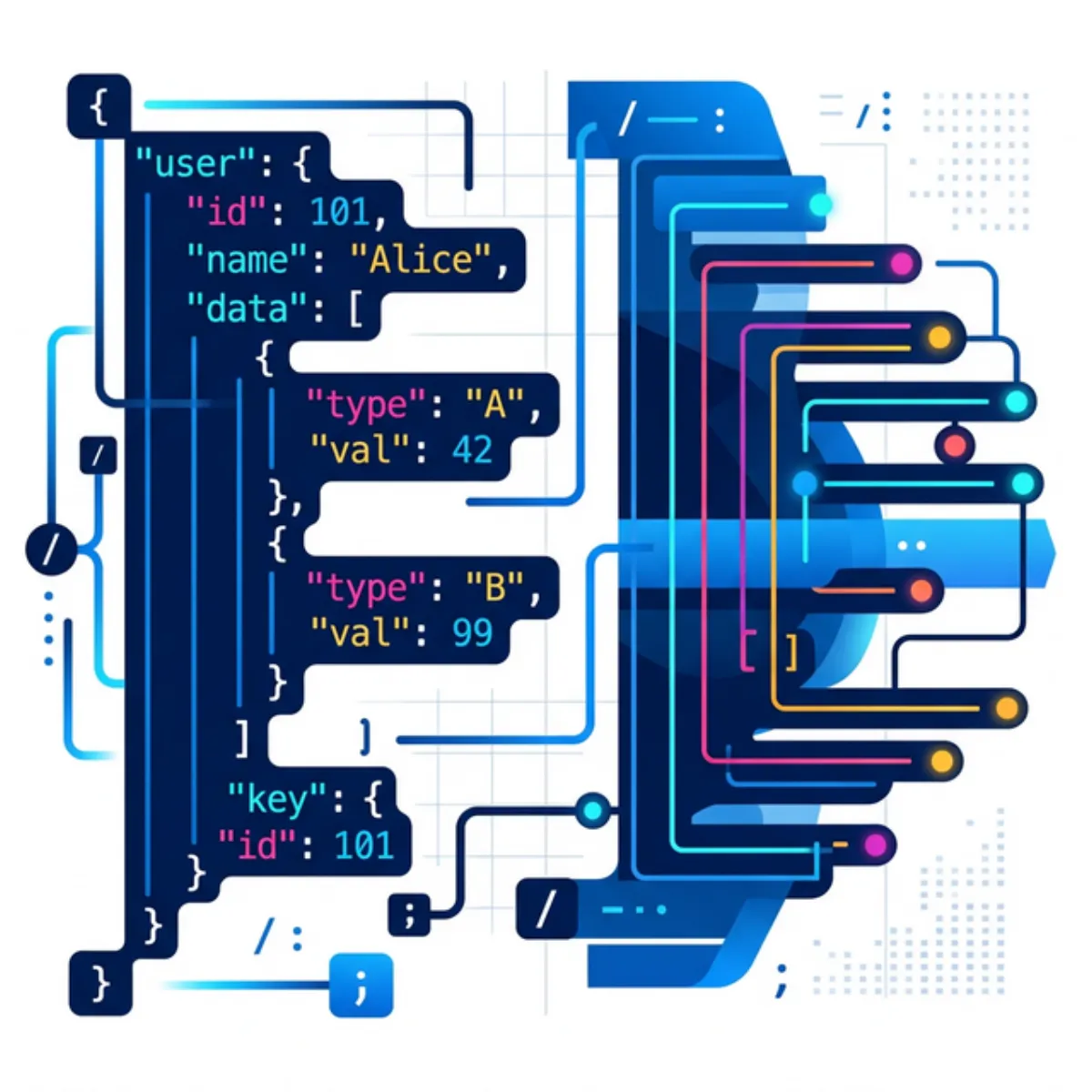

How to Use GLM-5.1: Developer Setup Guide

API Access via BigModel

The fastest way to get started is through BigModel (bigmodel.cn):

- Model name: glm-5.1

- Input: $1.40 per million tokens

- Output: $4.40 per million tokens

- Peak hours (14:00–18:00 UTC+8): 3x quota

- Off-peak: 2x quota (promotional 1x through April 2026)

GLM Coding Plan

Z.AI offers a dedicated GLM Coding Plan at $10/month that works with all major coding agents. Available in Max, Pro, and Lite tiers.

With Claude Code

Update the env block in your ~/.claude/settings.json so that Claude Code's Sonnet and Opus model slots both map to glm-5.1:

    {
      "env": {
        "ANTHROPIC_DEFAULT_SONNET_MODEL": "glm-5.1",
        "ANTHROPIC_DEFAULT_OPUS_MODEL": "glm-5.1"
      }
    }

With OpenClaw

Add GLM-5.1 to your ~/.openclaw/openclaw.json models configuration and set it as the primary model. This is particularly relevant given the recent Anthropic ban on OpenClaw users — GLM-5.1 offers a powerful open-source alternative at a fraction of the cost.

Local Deployment

GLM-5.1 weights are on HuggingFace at zai-org/GLM-5.1 under MIT License. Supported frameworks:

- vLLM v0.19.0+ — most popular for production

- SGLang v0.5.10+ — optimized for structured generation

- xLLM v0.8.0+ — lightweight inference

- Transformers v0.5.3+ — research and prototyping
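
Before loading the weights, it can help to confirm that the installed framework meets the minimum version listed above. The following is a rough sketch using only the standard library. The pip distribution names `vllm` and `sglang` are assumed to match the frameworks listed, the other two frameworks are left out because their distribution names are not given here, and the parser ignores pre-release suffixes, so treat it as a sanity check rather than a full version comparison.

```python
from importlib import metadata

# Minimum versions from the list above; distribution names are assumptions.
MINIMUMS = {"vllm": "0.19.0", "sglang": "0.5.10"}


def parse_version(text: str) -> tuple[int, ...]:
    """Parse leading numeric components, e.g. '0.19.0.post1' -> (0, 19, 0)."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def meets_minimum(installed: str, required: str) -> bool:
    """Compare numeric components, padding the shorter version with zeros."""
    have, need = parse_version(installed), parse_version(required)
    width = max(len(have), len(need))
    have += (0,) * (width - len(have))
    need += (0,) * (width - len(need))
    return have >= need


def report() -> dict[str, str]:
    """Return a status string per framework: 'ok', 'too old', or 'not installed'."""
    status = {}
    for name, minimum in MINIMUMS.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            status[name] = "not installed"
            continue
        status[name] = "ok" if meets_minimum(installed, minimum) else "too old"
    return status


print(report())
```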

Via OpenRouter / Requesty

GLM-5.1 is available immediately on both OpenRouter and Requesty for unified API access across multiple models.
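
As an illustration of the unified-API route, here is a minimal sketch of a chat request against OpenRouter's OpenAI-compatible endpoint using only the standard library. The model slug `z-ai/glm-5.1` and the `OPENROUTER_API_KEY` environment variable name are assumptions for this example; check the provider's model list for the actual identifier. The request is only sent when a key is present.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_SLUG = "z-ai/glm-5.1"  # assumption: verify the real slug in the provider's catalog


def build_request(prompt: str, api_key: str, max_tokens: int = 1024) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": MODEL_SLUG,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=60) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]


key = os.environ.get("OPENROUTER_API_KEY")  # hypothetical variable name
if key:
    print(send(build_request("Summarize what a Mixture-of-Experts model is.", key)))
```

Keeping request construction separate from sending makes it easy to inspect or log the payload before spending any tokens.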

Why GLM-5.1 Matters for the Industry

GLM-5.1 matters beyond its benchmarks for several critical reasons:

Open source beating proprietary. This is the first time an MIT-licensed model has taken #1 on SWE-Bench Pro. It validates the open-source approach and puts pressure on Anthropic, OpenAI, and Google to justify their premium pricing.

Productive autonomy as a metric. GLM-5.1 shifts the conversation from 'how smart is the model' to 'how long can it work independently.' 8 hours of autonomous coding is not incremental — it is a qualitative shift.

Enterprise self-hosting. MIT License means enterprises can deploy on their own infrastructure with full data privacy. No vendor lock-in, no data leaving the organization.

Existing tooling support. GLM-5.1 works with Claude Code, OpenClaw, Cline, and other agent frameworks. No new ecosystem to learn.

Trained on Huawei Ascend chips. GLM-5.1 does not require NVIDIA hardware, which is geopolitically significant and opens deployment options for organizations that cannot source NVIDIA GPUs.

OpenClaw alternative. For the 135,000+ OpenClaw users who lost affordable Claude access after Anthropic's ban, GLM-5.1 represents a powerful alternative that aligns with the open-source philosophy. Read our full coverage of the Anthropic-OpenClaw situation.

The trajectory is clear: open-source AI models are converging with proprietary frontier models on capability while offering dramatically better economics and data sovereignty. GLM-5.1 is the strongest evidence yet that the future of agentic AI may be open source.

At DevPik, we believe the best tools are the ones you control — whether it's an open-source AI model or a browser-based developer tool. Try our 40+ free tools including JSON tools and text generators.